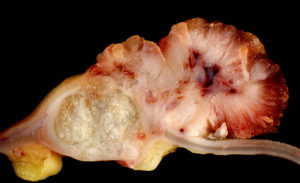

“Adenocarcinoma of Ascending Colon Arising in Villous Adenoma,” Ed Uthman on Flickr, March 29, 2007

In my previous post, I offered some reflections on my recent paper (with Marcin Waligora and colleagues) on pediatric phase 1 cancer trials, along with three plausible implications. In this post, I want to highlight two reasons why I think it’s worth facing up to one of the possible implications I posited, namely (b): a lot of shitty phase 1 trials in children are pulling the average estimate of benefit down, making it hard to discern the truly therapeutic ones.

First, the quality of reporting in phase 1 pediatric trials, like that of cancer trials in general (see here and here and here; oncology: wake up!!), is bad. For example:

“there was no explicit information about treatment-related deaths (grade 5 AEs) in 58.82% of studies.”

This points in general to the low scientific standards we tolerate in high-risk pediatric research. We should be doing better. I note that living by the Noble Lie that these trials are therapeutic makes it easier to live with such reporting deficiencies: researchers, funders, editors, IRBs, and referees can always console themselves with the notion that, even if trials don’t report findings properly, at least children benefited from study participation.

A second important finding:

“The highest relative difference between responses was again identified in solid tumors. When 3 or fewer types of malignancies were included in a study, response rate was 15.01% (95% CI 6.70% to 23.32%). When 4 or more different malignancies were included in a study, response rate was 2.85% (95% CI 2.28% to 3.42%); p < 0.001.”

This may be telling us that when we have a strong biological hypothesis, such that we are very selective about which populations we enroll in trials, risk/benefit is much better. When we use a “shotgun” approach of testing a drug in a mixed population (that is, when we lack a strong biological rationale), risk/benefit is a lot worse. Perhaps we should be running fewer and better justified phase 1 trials in children. If that is the case (and, to be clear, our meta-analysis is insufficient to prove it), then it’s the research that needs changing, not the regulations.

Nota Bene: Huge thanks to an anonymous referee for our manuscript. Wherever you are, you held us to appropriately high standards and greatly improved our manuscript. Also, a big congratulations to the first author of this manuscript, Professor Marcin Waligora. Very impressive work; I’m honored to have him as a collaborator!

BibTeX

@Manual{stream2018-1583,
  title = {Risk/Benefit in Pediatric Phase 1 Cancer Trials: Noble Lie? (part 2)},
  journal = {STREAM research},
  author = {Jonathan Kimmelman},
  address = {Montreal, Canada},
  date = 2018,
  month = feb,
  day = 27,
  url = {http://www.translationalethics.com/2018/02/27/risk-benefit-in-pediatric-phase-1-cancer-trials-noble-lie-part-2/}
}


I first met Kathy in August 2001 when, newly arrived in Montreal with a totally useless PhD in molecular genetics, I approached her, hat in hand, looking for a postdoctoral position in Biomedical Ethics. Actually, my hat wasn’t in hand; it was on my head, because a week earlier I had accidentally carved a canyon in my scalp when I left the spacer off my electric razor. Apparently, Kathy wasn’t put off by my impertinent attire, and she hired me. That was the beginning of a beautiful mentorship and, years later, as the director of the Biomedical Ethics Unit, Kathy hired me as an Assistant Prof.
